Post-Mortem Report: Ethereum Mainnet Finality (05/11/2023)

Date: 2023/05/12

Authors: Nishant Das, Terence Tsao, Preston Van Loon, Potuz, Kasey Kirkham, James He

Status: Mitigated. Investigation complete. Prysm v4.0.4 released with fixes.

Network: Mainnet

Summary

On Thursday, 2023–05–11 around 20:19 UTC, Ethereum’s Mainnet network suffered a significant lack of block production which led to a temporary delay (4 epochs) in finalization. The same incident occurred the following day for slightly longer (9 epochs) and incurred an inactivity penalty. In both incidents, the blockchain recovered without any intervention.

Impact

Approximately 47 blocks were missed during the first incident which could be attributed to root cause. In the second incident, approximately 149 blocks were missing. When the lack of finality passed 5 epochs in the second incident, an inactivity penalty started to apply and quadratically increase each epoch. Each block should reward the producer with at least 0.025 ETH on average and the missing blocks represent a total lost revenue of 5 ETH for impacted block producers. However, the true lost revenue is likely much higher if builder bundle rewards are considered. If we assume that 65% of the validators were offline for 8 epochs with an inactivity leak, we estimate that a loss of 28 ETH was incurred in addition to approximately 50 ETH in lost revenue from missing attestations.

In total, we estimate that 28 ETH of penalties were applied and validators missed 55 ETH or more of potential revenue. This is less than 0.00015 ETH per validator.

No validator slashings were attributed to these events, although validators 48607, 48608, and 48609 were slashed slightly before and after the second incident. These slashings were likely due to operator error while switching clients or attempting a complex unsafe failover.

End-user transactions were minimally impacted. While there was a significant drop in available block space, gas prices did not increase higher than the daily highest gas price.

Root Causes

Some consensus clients, including Prysm, could not process valid attestations with an old target checkpoint in an optimal way. These specific attestations caused clients to recompute prior beacon states in order to validate that the attestations belonged to the appropriate validator committees. When many of these attestations were received, Prysm would suffer from resource exhaustion from these expensive operations and could not fulfill the requests of the validator clients in a timely manner.

Trigger

Many attestations voting to an old beacon block (that is a block from epoch N-2 during epoch N), both as head block and as target root, were broadcast. This is standard behavior of some CL clients (e.g. Lighthouse does this) when their Execution Client is not responding. These attestations, while valid, require a Beacon state regeneration to validate them. Prysm has a cache to not repeat these validations, but this cache was quickly filled up and this forced Prysm to regenerate the same state multiple times.

Detection

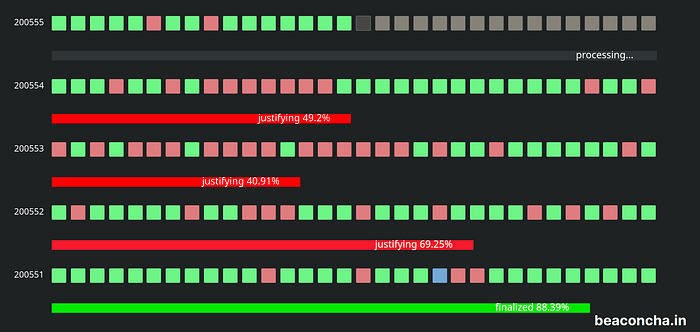

A significant drop in network participation was observed in epoch 200,551 and the chain temporarily stopped finalizing until epoch 200,555.

Another significant drop in network participation was observed in epoch 200,750 and the chain temporarily stopped finalizing until epoch 200,759.

What Went Wrong

- The network was failing to finalize due to missing blocks and attestations.

- Beacon chain clients had additional stress on the network caused by max deposits being processed.

- In Prysm, too many replays (

replayBlocksfunction) happen because we do not have a cache for replays. This was also what created all the go routines and CPU usage. Sometimes the same data would be replayed multiple times. Sometimes the replay is happening even before the first finished. Should be ignoring attestations at the previous epoch, and should be using head state. - There is a bug in Prysm: nodes that are connected to all subnets can be DOSsed by these attestations (it seems that all nodes are subject to this, probably except Lighthouse) as the cost of being resilient to forked networks. When the beacon state was smaller, Prysm would be able to handle these attestations and recover appropriately. However, with the large spike in deposits and the growing validator registry size Prysm was unable to recover this time.

Lighthouse opted to drop attestations in order to stay live, we opted to be able to keep many forks at the same time in order to be able to pick correctly a canonical chain in case the network is very forked. Lighthouse’s technique is better here because the network is not forked. They simply dropped attestations and followed the chain. If the network would have been forked, we would have been in trouble cause then Lighthouse would be contributing to not gossiping attestations. - Some client default attestation behavior is to produce valid blocks with old attestations when the execution client has issues or is offline. other clients may deal with this differently such as considering the situation as optimistic sync. This can create blocks with old attestations triggering the issues in Prysm and Teku.

Where We Got Lucky

- In the first incident, the duration of downtime was only 2 epochs reaching finality after 25 minutes. The second incident was also relatively short with only 8 epochs of symptoms. No mass slashings were reported in either incident.

- Client diversity helped the chain recover with some clients still being able to propose blocks and create attestations.

- Lighthouse dropped the problematic attestations and stayed alive.

- No manual intervention or emergency release was required to resolve the immediate issue finality issue.

Lessons Learned

- Testnets are not representative of the Mainnet environment. Goerli/Prater is only 457k validators while Mainnet is more than 560k and mass deposits are happening in Mainnet due to validator rewards restaking.

- Inactivity leak penalties work in Mainnet! 🎉

Fixes Implemented

- Use the head state when validating attestations for a recent canonical block as target root. This was the main bug! Prysm was regenerating the state for canonical slot 25 if used as target root on epoch 1 during slot 33.

- Use the next slot cache when validating attestations for boundary slots in the previous epoch.

- Discard any attestations that weren’t validated by the previous two rules and are for a target root that is not a checkpoint in any chain that we are currently aware of, and is not the tip of any chain that we are currently aware of (They will be processed if included in blocks).

- With the above rules, there are essentially no state replays that need to be done on Mainnet under normal conditions, and those attestations for old blocks (which are mostly worthless to the network) are just ignored.

Timeline (Approximate)

2023/05/11 Thursday (UTC)

20:06:47 — Epoch 200,551 begins, there is a missed/orphaned block and a few missed slots, and network participation drops to 88.4%

20:13:11 — Epoch 200,552 begins and there are more missed slots in this epoch, network participation drops to 69%

20:19:35 — Epoch 200553 begins. Gradually more and more consecutive slots were missed causing a total of 18/32 slots to missed and not finalizing the block. The most consecutive misses between slots 6417716 ~ 6417709. Network participation is at a low of 40%. It is clear that this isn’t just a Prysm issue as Prysm represents only 33% of the network.

20:29:00 — Prysm team is all hands on deck with an investigation of ongoing issues.

20:32:23 — Epoch 200555 starts and the network begins to recover without intervention.

2023/05/12 Friday (UTC)

17:20:23 — Epoch 200750 begins. Blocks are missing at an increasing rate and participation drops to 66.3%.

17:26:47 — Epoch 200751 begins. Only 13 out of 32 blocks are produced. Participation drops to 42.4%.

17:30:00 — “Mainnet stopped finalizing again”. All hands on deck.

17:33:11 — Epoch 200752 begins. Only 14 blocks are produced. Participation drops further to 30.7%. This is the lowest participation of any epoch in Mainnet ever.

18:17:59 — Epoch 200759 begins. 24 of 32 blocks are produced and participation is 81.7%. This epoch is the start of recovery.

18:24:23 — Epoch 200760 begins. 27 of 32 blocks are produced with 86.2% participation. This epoch restores finalization.